Sorry, this activity is currently hidden

Section outline

-

Introduction

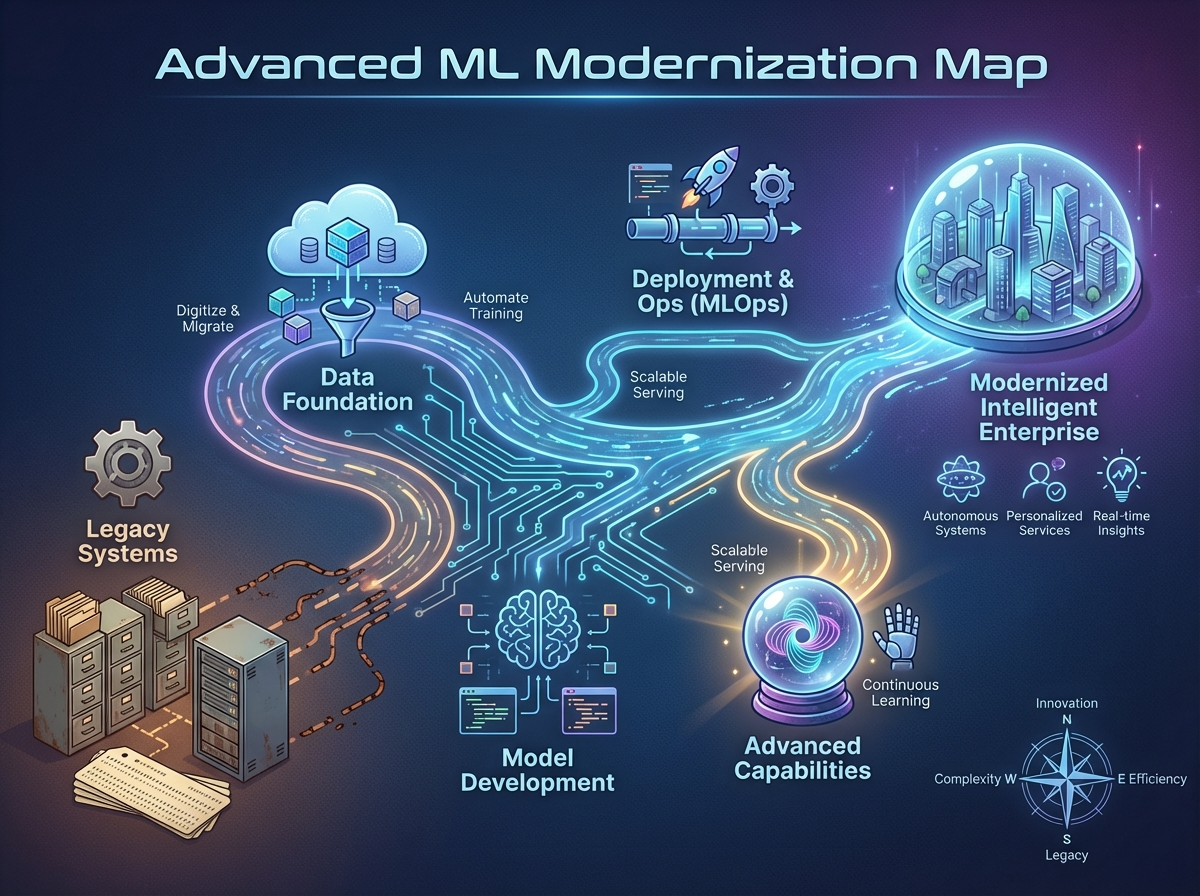

This section maps how classical ML practice has evolved, clarifying when modern representation learning helps and when well-tuned classical models remain the best choice. You’ll focus on data-regime tradeoffs and the practical risks that matter most in classical ML deployments, especially distribution shift and drift. The section also updates evaluation habits by moving beyond accuracy toward calibration and uncertainty-aware decision-making.

Learning Objectives

-

Distinguish feature engineering vs. representation learning and identify when each approach is appropriate in classical ML settings.

-

Analyze data-regime tradeoffs to justify when classical models can outperform modern alternatives.

-

Diagnose key modern ML failure modes (distribution shift and drift) and select evaluation metrics that capture calibration and uncertainty.

-

-

Introduction

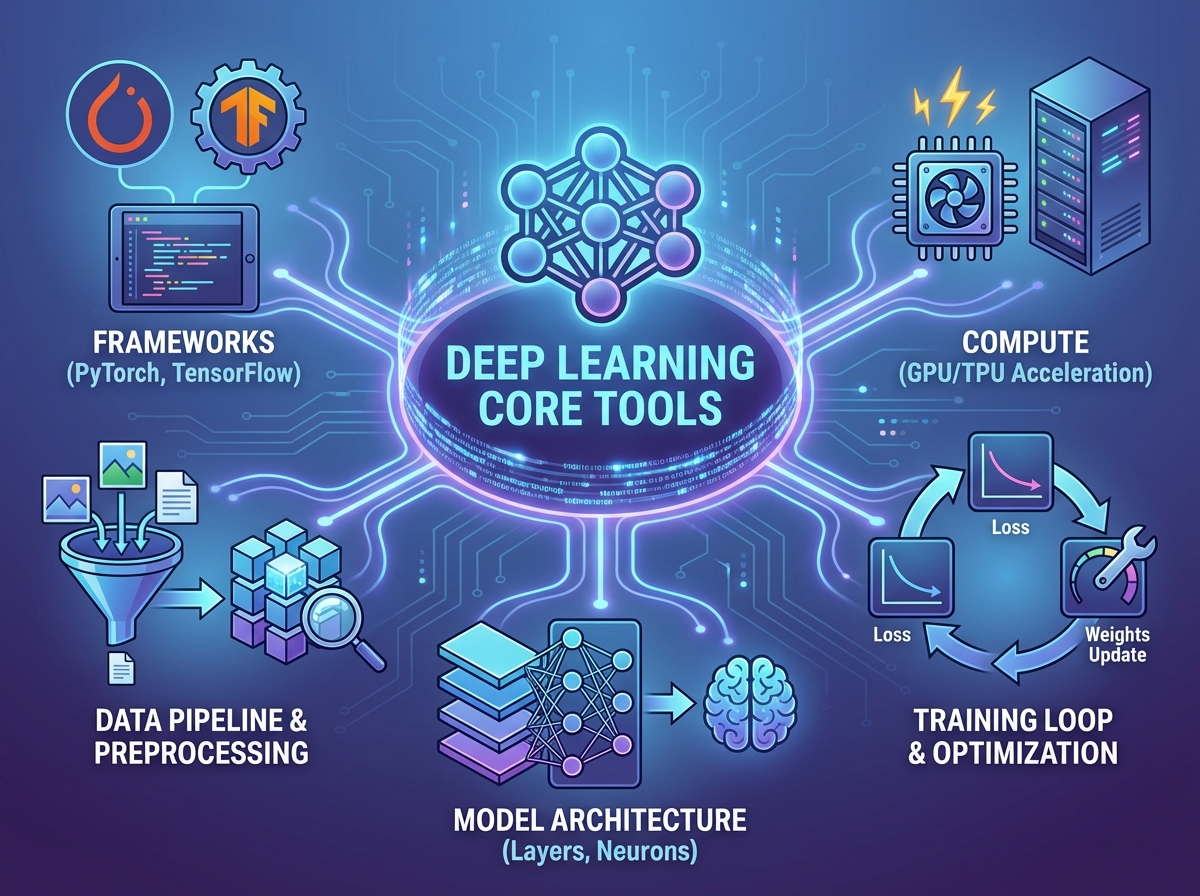

This section equips you with the practical deep learning toolkit needed to complement and extend classical ML workflows. You’ll focus on core inductive biases, optimization habits, and generalization controls that determine when neural models outperform or underperform strong classical baselines. The goal is to make deep learning behavior predictable enough to integrate into classical-ML-centric evaluation and modeling decisions.

Learning Objectives

-

Differentiate key inductive biases (convolution, attention, recurrence) and select an appropriate architecture family for a given data structure.

-

Apply practical optimization techniques (initialization, regularization, and learning-rate schedules) to stabilize training and improve convergence.

-

Use generalization and scaling levers (augmentation, early stopping, dropout, batch size/throughput) to improve performance under fixed compute budgets.

-

-

Introduction

This section consolidates the advanced concepts from the course into a coherent mental model you can apply to Classical ML workflows. You’ll connect modernization tradeoffs, evaluation rigor, and deep learning tooling back to practical decisions in classical settings. The goal is to leave with a clear checklist for choosing, validating, and maintaining models in real deployments.

Learning Objectives

-

Synthesize key ideas across modernization, evaluation, and deep learning tools into a unified decision framework.

-

Diagnose common failure modes (shift/drift, miscalibration) and select appropriate evaluation signals to monitor them.

-

Define a focused next-steps plan for continued learning aligned to Classical ML practice.

-