Section outline

Introduction

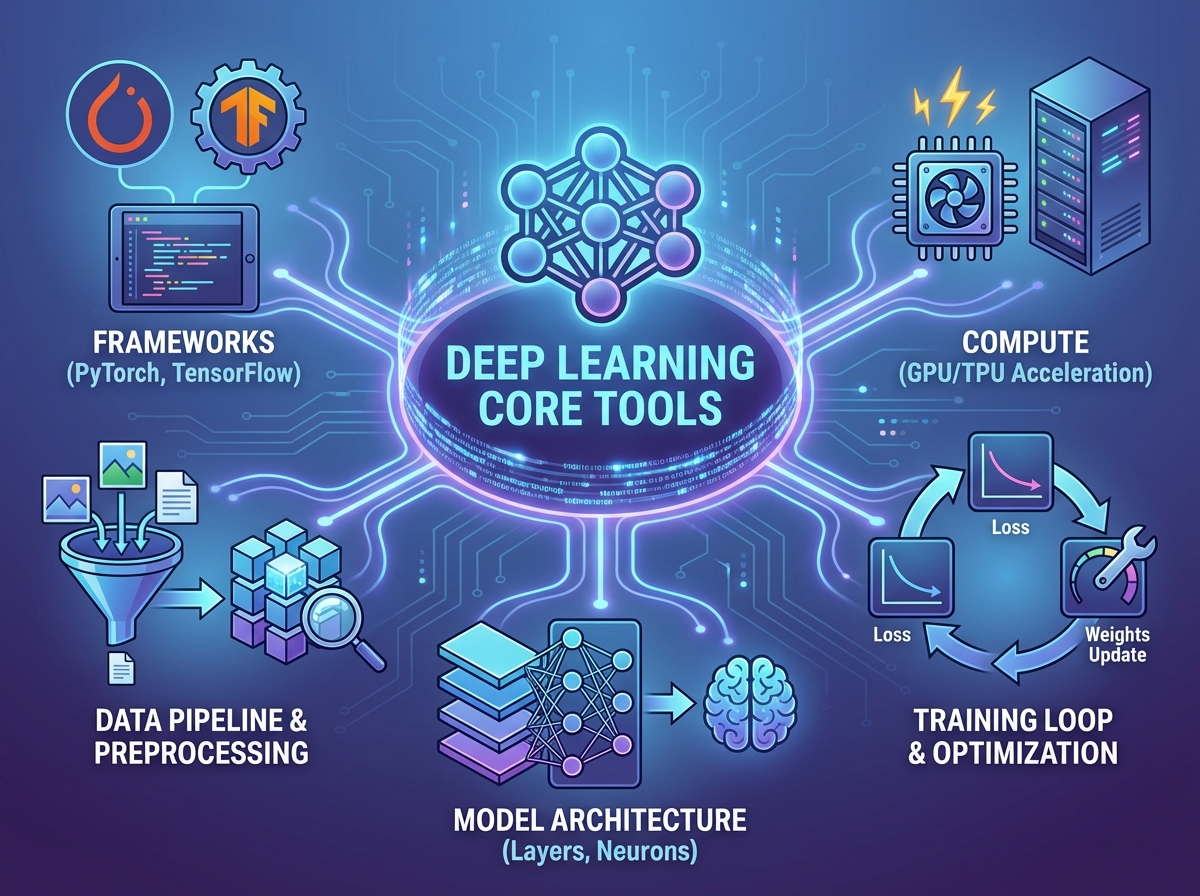

This section equips you with the practical deep learning toolkit needed to complement and extend classical ML workflows. You’ll focus on the core inductive biases, optimization habits, and generalization controls that determine when neural models outperform or underperform strong classical baselines. The goal is to make deep learning behavior predictable enough that neural models can be judged by the same evaluation and modeling decisions you already apply in a classical-ML-centric workflow.

Learning Objectives

- Differentiate key inductive biases (convolution, attention, recurrence) and select an appropriate architecture family for a given data structure.
- Apply practical optimization techniques (initialization, regularization, and learning-rate schedules) to stabilize training and improve convergence.
- Use generalization and scaling levers (augmentation, early stopping, dropout, batch size/throughput) to improve performance under fixed compute budgets.
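Two of the levers named in these objectives, learning-rate schedules and early stopping, are simple enough to preview in plain Python. The sketch below assumes one common pairing (linear warmup followed by cosine decay, plus a patience-based early-stopping check); the names `warmup_cosine_lr` and `EarlyStopping` and every default value are hypothetical, chosen for illustration rather than taken from this course. Deep learning frameworks ship their own schedulers and callbacks, so treat this as a conceptual model, not a drop-in implementation.

```python
import math


def warmup_cosine_lr(step: int, total_steps: int, base_lr: float,
                     warmup_steps: int = 0, min_lr: float = 0.0) -> float:
    """Hypothetical helper: linear warmup to base_lr, then cosine decay to min_lr."""
    if total_steps <= 0:
        raise ValueError("total_steps must be positive")
    if step < warmup_steps:
        # Linear ramp: base_lr / warmup_steps at step 0, base_lr at the last warmup step.
        return base_lr * (step + 1) / warmup_steps
    # Fraction of the post-warmup phase completed, clamped to [0, 1].
    decay_steps = max(1, total_steps - warmup_steps)
    progress = min(1.0, (step - warmup_steps) / decay_steps)
    # Cosine factor falls smoothly from 1 to 0 as progress goes from 0 to 1.
    cosine = 0.5 * (1.0 + math.cos(math.pi * progress))
    return min_lr + (base_lr - min_lr) * cosine


class EarlyStopping:
    """Hypothetical helper: stop after `patience` checks without improving by min_delta."""

    def __init__(self, patience: int = 3, min_delta: float = 0.0):
        self.patience = patience
        self.min_delta = min_delta
        self.best = math.inf      # best validation loss seen so far
        self.bad_checks = 0       # consecutive checks without sufficient improvement

    def step(self, val_loss: float) -> bool:
        """Record one validation loss; return True if training should stop."""
        if val_loss < self.best - self.min_delta:
            self.best = val_loss
            self.bad_checks = 0
        else:
            self.bad_checks += 1
        return self.bad_checks >= self.patience


if __name__ == "__main__":
    total = 100
    lrs = [warmup_cosine_lr(s, total, base_lr=0.1, warmup_steps=10) for s in range(total)]
    print(f"lr at step 0: {lrs[0]:.4f}, step 9: {lrs[9]:.4f}, step 99: {lrs[99]:.6f}")

    stopper = EarlyStopping(patience=2)
    # Made-up validation losses for illustration only, not real results.
    for epoch, loss in enumerate([0.90, 0.70, 0.65, 0.66, 0.67, 0.60]):
        if stopper.step(loss):
            print(f"stopping at epoch {epoch}; best val loss {stopper.best}")
            break
```

Warmup followed by cosine decay is one widely used schedule, not the only reasonable choice; step and exponential decay are common alternatives. In a real workflow, `val_loss` would come from a held-out validation set, and you would usually restore the weights from the best checkpoint rather than keep the final ones.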